Also published in AirMed&Rescue, Nov 2021 edition.

Automation reduces workload, frees attentional resources to focus on other tasks, and is capable of flying the aircraft more accurately than any of us. It is simultaneously a terrible master that exposes many human limitations and appeals to many human weaknesses.

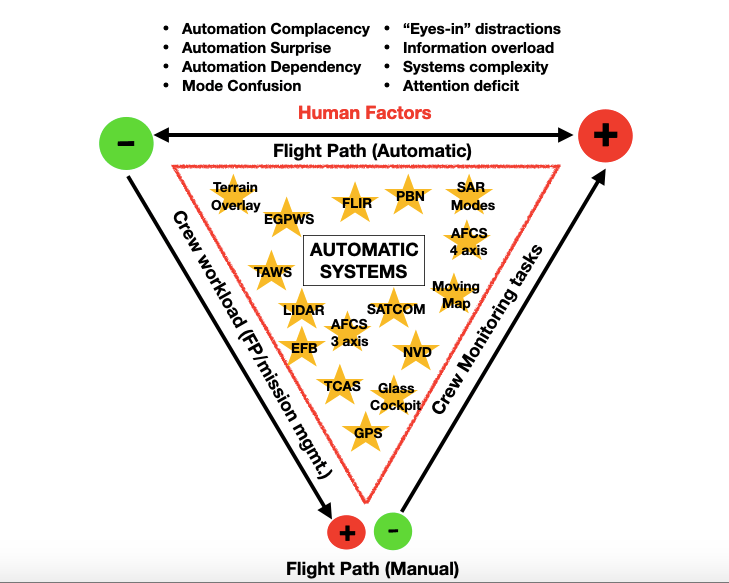

In our bid to reduce crew workload across many different tasks and to increase situational awareness with tools including GPS navigation on moving maps, synthetic terrain displays, and ground proximity warning systems, we have also opened a Pandora’s Box of human factors that can bring us back down to the ground with a bump. Sometimes literally. These include powerful forces such as technology-driven complacency and the ever-growing internal distractions of the modern cockpit. In changing the pilot’s workload to monitoring and evaluating multiple systems, are we utilizing automation in a way that best meets our human limitations?

Calculation methods of old

One of my first SAR missions as a junior co-pilot in the UK Royal Navy had the crew heading out at night to a position over two hundred miles west of Land’s End, the most westerly point of Britain and just at the limits of our range. I distinctly remember my nervous laugh as the flight navigator asked me to check his calculations of range, endurance, and point of no return, which were worked out using his whizz wheel flight computer – otherwise known back then as the Dalton Confuser, a version of which will be more familiar as the CRP5.
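For readers who never had to spin a whizz wheel, the point-of-no-return check mentioned above can be sketched in a few lines. This is only an illustration of the standard textbook relationship (time to PNR equals usable endurance multiplied by groundspeed home, divided by the sum of groundspeed out and groundspeed home), not a record of the navigator's actual working. The function name, parameter names, and the assumption that reserves have already been subtracted from the endurance figure are all mine.

```python
def point_of_no_return(endurance_hr, gs_out_kt, gs_home_kt):
    """Return (time_to_pnr_hr, distance_to_pnr_nm).

    endurance_hr: usable endurance in hours (assumed to exclude reserves).
    gs_out_kt:    groundspeed on the outbound leg, in knots.
    gs_home_kt:   groundspeed on the return leg, in knots.
    Wind is accounted for only through the two groundspeeds.
    """
    if min(endurance_hr, gs_out_kt, gs_home_kt) <= 0:
        raise ValueError("endurance and groundspeeds must be positive")
    # Time out plus time back must equal the usable endurance.
    time_to_pnr_hr = endurance_hr * gs_home_kt / (gs_out_kt + gs_home_kt)
    distance_to_pnr_nm = time_to_pnr_hr * gs_out_kt
    return time_to_pnr_hr, distance_to_pnr_nm


if __name__ == "__main__":
    # Hypothetical figures, chosen only to show the arithmetic.
    t, d = point_of_no_return(endurance_hr=4.0, gs_out_kt=100.0, gs_home_kt=120.0)
    print(f"PNR after {t:.2f} h, {d:.0f} nm from base")
```

In still air the PNR falls at half the usable endurance; a tailwind on the way home moves it further out, and a headwind on the way home pulls it closer to base.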

The Mk5 Sea King had changed little since its entry into service in the mid-20th Century, and the Navy had resisted most of the bolt-on technology that might have made life easier for pilots. Even back in 2009, the aircraft had no radio navaids, no GPS, no FLIR, no TCAS, no TAWS, and no satphone. It only had a basic AFCS with radio altimeter and barometric height hold. Raw radar information was interpreted using acetate overlays by the navigator and could not be displayed to the pilots visually. No instrument approaches were available other than talk down. We navigated with paper maps and charts, Second World War-era flight computers, and aeronautical information books.

This will sound familiar to rotary-wing pilots of a certain age, but this was only 12 years ago. My cohort of military SAR pilots are the last to experience this type of largely unaided flying and mission-management. In the decade that followed, a generational change of aircraft, a huge shift in risk management culture, and a technological explosion in aids to pilot workload and situational awareness combined to bring a step-change in automatic flight control, and other game-changing technologies, to helicopter cockpits.

It is as if I have experienced aviation time travel within such a brief period. Certainly, all these modern systems and aids have made life easier. But can we state definitively that they have made our operations safer?

Automate to safe flight

One approach to answering this question is a statistical analysis of accidents and incidents over the period in question. I delved into the data from EASA’s annual safety reports and, to keep things as simple, relevant, and as easily comparable as possible, I chose to focus on Commercial Air Transport (CAT) operations. CAT is a strong category to focus on because it includes many special missions such as HEMS and SAR as well as offshore operations. These tend to be larger, more modern aircraft that are most likely to be equipped with the technological upgrades to increase capability and reduce crew workload.

Figure 1 is a compilation of data from EASA Safety Reviews covering 2009-2019. This period not only represents a period of rapid technological change in aviation, and particularly the rotary-wing sector, but also correlates with my own technological transition, from the Sea King SAR mission in 2009 to my return to SAR in 2019, flying the contemporary AW139. The graph displays the total number of accidents and serious incidents for helicopters of EASA Member States engaged in CAT during that period.

We might pose the reasonable hypothesis that during a decade of rapid technological progress resulting in both radical reductions to crew workload and gains in situational awareness and mission management capacity, we would see a corresponding increase in safety. But this hypothesis is not borne out by the accident rate – one of the best metrics we have for evaluating safety outcomes where they most matter. The trend line (drawn in light blue) doesn’t show the decrease we would expect. In fact, it isn’t even flat.
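As an aside on method, a trend line of this kind is usually an ordinary least-squares fit of yearly counts against year, and the sign of its slope is what tells us whether things are improving. The sketch below shows that calculation under my own assumptions: the function name is hypothetical, it is not necessarily how the line in Figure 1 was produced, and the numbers in the usage example are synthetic placeholders rather than EASA data.

```python
def linear_trend(years, counts):
    """Fit counts = slope * year + intercept by ordinary least squares.

    Returns (slope, intercept). A negative slope would indicate a falling
    number of events per year, a positive slope a rising one.
    """
    n = len(years)
    if n < 2 or n != len(counts):
        raise ValueError("need at least two points and equal-length inputs")
    mean_x = sum(years) / n
    mean_y = sum(counts) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    if sxx == 0:
        raise ValueError("years must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, counts))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


if __name__ == "__main__":
    # Synthetic placeholder values, NOT the EASA figures.
    slope, intercept = linear_trend([0, 1, 2, 3], [1, 3, 5, 7])
    print(f"slope = {slope:+.2f} events per year")
```

With only a decade of yearly counts, a slope like this is a rough indicator rather than a statistically robust result, which is worth bearing in mind when reading any such graph.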

Despite CAT operations being at the vanguard of both the generational change of helicopters and deployment of cutting-edge technology touted as bringing game-changing benefits to flight safety, we are still getting ourselves into trouble in the air at the same rate as in the years we were flying mostly manually. This is particularly the case in HEMS operations across Europe, which stand out as an operation type that consistently (2009-2019) had an average mishap rate (4.2 per year) nearly twice that of the next most-reported group in CAT, and which rose notably in 2018 and 2019, with 12 and seven accidents/serious incidents respectively.

The reasons behind this are complex and multifaceted, but at least part of it lies in our relationship with all this automation.

The expanding human factor

No one can question the huge advance in capability that all this technology brings – one benefit of which is the reduction of pilot workload. Thanks to GPS, we don’t have to worry too much about where we are anymore, or where we’re going. We can see that represented in any number of deliberately ergonomic formats in front of us. Thanks to TCAS, we know if there is traffic around and where to look for it. It even tells us how to avoid it. PBN provides us with configurable instrument approaches to points in space. With FLIR and NVG, we can see and search in the dark and the wet, increasing capabilities immeasurably. This list is far from exhaustive.

But while workload has undeniably reduced in many areas, it has also changed. A bit like squeezing a stress ball, we have compressed some areas, only to find it has ballooned out in others. The need for us to manage all this new technology and automation has produced additional tasks for the crew that didn’t exist before. For years now, we have increasingly added avionics and mission systems to the aircraft, and much of the pilot’s new workload consists of monitoring and evaluating feedback from multiple systems.

The great irony is that the task of monitoring the output of these systems is not one that matches particularly well with the capabilities of the human brain. We hit up against two key human limitations: the first being our brain’s ability to focus, select, and sustain attention. When we monitor multiple sources of information, we are applying selective attention, with greater attention being given to the one or more sources that are deemed most important. This applies to most flying tasks. Distraction is the negative side of selective attention.

The second is our capacity to process large quantities of information and handle extreme complexity. The ergonomics and tools of the modern cockpit mean much more information is available and presented, and this is not just a problem of deciding what to take in. As the systems with which we work become cleverer and more complex, we reach the boundaries of what the average person can understand about the design and architecture of the systems they are operating, preventing us from holding accurate mental models of them to fall back on for fault diagnosis or when things start to get confusing. A 2013 study by the FAA – Operational Use of Flight Path Management Systems – found that errors related to the operation and monitoring of automated systems contributed to over 30 per cent of incidents they reviewed, concluding that they were down to an inaccurate understanding of how these systems worked.

Aviation is a system of systems, and one becoming ever more complex. Aviation professionals have become exceptional at finding ways to improve the reliability, serviceability, and capability of all the systems around us.

Harness strength, restrain weaknesses

The burgeoning of automation is a great example of this. But the human still sits in the middle of this system and the human’s failings have remained, by and large, the same. The current evolution of technology in aviation is resulting in the scenario where the human in the system is ever more frequently the point of failure.

But this perspective neglects to acknowledge the key role that the human can play in a system to step in and prevent things that might otherwise go wrong. We are starting to acknowledge that human performance also contributes significantly to the overall safety performance of the aviation system. The International Civil Aviation Organization’s latest Manual on Human Performance recognizes that ‘within a complex system, it is the human contribution that often provides the important safety barriers and sources of recovery’.

The tension between these two different approaches to understanding and reconciling the human contribution can often lead to emotionally charged discussion, particularly with respect to highly automated environments like the modern cockpit. But the reality is that they are two sides of the same human coin. Have we been slow to realize the significance of investing proportionately to the impact of the human contribution, regardless of whichever side of this coin it represents?

Because the human risk profile is growing, you would expect this to be reflected in safety and risk management strategies; we have learned to manage the fallibility of engines, airframes, and components through cycles, periodic maintenance, preventative maintenance, fault rectification, refurbishment, and all sorts of other techniques, to achieve extremely high levels of reliability. We have not yet achieved the same with the human element. Operators, manufacturers, and regulators expend huge energy and effort creating rules, structures, and systems to manage design and operational risk, but often relegate the human dimension within this risk management to a less significant position than it truly represents. At the end of the day, when the investigators are picking through the smoking pieces of an accident site, it is not the aircraft that is the asset, but the people.

In automation, we have a technology that supposedly improves or removes human capability, so it seems a paradox that the human factor has grown with it. Automation does not overcome human failings; it just shifts them around. There is also an irony in acknowledging that, while reducing the human role in skills-based tasks, automation has nevertheless taught us the continuing importance of human contribution. It is not a paradox to state that the human is both the strongest barrier and the weakest link in the safety chain. It is likely that we always will be. Future safety outcomes will depend ever more on how much time we dedicate to understanding this, and how we choose to balance these opposing forces.