Celebrating one year of H2F Bitesize. I reflect on what 52 weeks of helicopter human factors posts looks like and what I have taken from them. I bring you a weekly bite-sized chunk of the science behind helicopter human factors and CRM in practice, simplifying the complex and distilling a helicopter-related study into a summary of fewer than 500 words.

Category Archives: Human Factors

H2F BITESIZE #52

This week: Startle and surprise in helicopter operations: Reported prevalence and application of mitigation strategies.

H2F BITESIZE #51

This week: Supporting the investigation of language and other communication factors.

H2F BITESIZE #50

This week: Essential attributes for successful military student pilots: A focus group study.

H2F BITESIZE #49

This week: The effects of Crew Resource Management on Flight Safety Culture: Corporate Crew Resource Management (CRM 7.0)

H2F BITESIZE #48

This week: Crew Resource Management for Automated Teammates (CRM-A).

H2F BITESIZE #47

This week: Principles for intelligent assistant systems in future flight deck design: autonomous action integration to reduce pilot workload

H2F BITESIZE #46

This week: Understanding pilots’ perceptions of AI-mediated mental health support in aviation: a socio-technical framework.

H2F BITESIZE #45

This week: Safety at high altitude: The importance of emotional dysregulation on pilots’ risk attitudes during flight.

H2F BITESIZE #44

This week: On error classification from physiological signals in an airborne environment.

H2F BITESIZE #43

This week: Organizational pressure and pilot decision-making in adverse weather: A naturalistic decision-making analysis of helicopter accidents.

H2F BITESIZE #42

This week: Effects of unexpected event urgency and flight scenario familiarity on pilot trainees’ performance and stress responses.

H2F BITESIZE #41

This week: Integrating digital competency into aviation training: an auditable Inputs–Processes–Outcomes framework

H2F BITESIZE #40

This week: Machine learning methods for cognitive load analysis and classification in aviation.

H2F BITESIZE #39

This week: Non-technical skills in the civil aviation sector.

H2F BITESIZE #38

This week: The first fatal Helicopter Emergency Medical Services crash in Turkey: Weather, human factors, and lessons learned.

H2F BITESIZE #37

This week: Wellbeing of Helicopter Emergency Medical Services Personnel in a Challenging Work Context: A Qualitative Study

H2F BITESIZE #36

This week: Effectiveness of a new basic course incorporating a medical trainer simulator for HEMS education in Japan: A pre–post intervention study.

H2F BITESIZE #35

This week: Using SHERPA to predict human error on the maritime SAR helicopter hoist task.

H2F BITESIZE #34

This week: Enhancing aviation safety with artificial intelligence: A systematic literature review on recent advances, challenges and future perspectives.

H2F BITESIZE #33

This week: The potential of flight simulation to support pilot training for mountain helicopter emergency medical services.

H2F BITESIZE #32

This week: Quantifying the impact of spatial disorientation on pilot mental workload and attentional focus.

H2F BITESIZE #31

This week: Helicopter pilots encountering fog: an analysis of 109 accidents from 1992 to 2016.

H2F BITESIZE #30

This week: Incidence and challenges of helicopter emergency medical service (HEMS) rescue missions with helicopter hoist operations: analysis of 11,228 daytime and nighttime missions in Switzerland.

H2F BITESIZE #29

This week: Effects of acute stress on aircrew performance: Literature review and analysis of operational aspects

H2F BITESIZE #28

This week: Workload in helicopter rescue operations – A comparison of two different rescue methods in a randomised cross-over design.

H2F BITESIZE #27

This week: Crew Resource Management: What aviation can learn from the application of CRM in other domains

H2F BITESIZE #26

This week: Pilot see, pilot do: Examining the predictors of pilots’ risk management behaviour

H2F BITESIZE #25

This week: Emotions-based training: Enhancing aviation performance through self-awareness and mental preparation, coping with stress and emotions.

H2F BITESIZE #24

This week: Hero or Hazard: A systematic review of individual differences linked with reduced accident involvement and influencing success during emergencies.

H2F BITESIZE #23

This week: Exploring the role of pilot attributes and skills in response to in-flight emergencies.

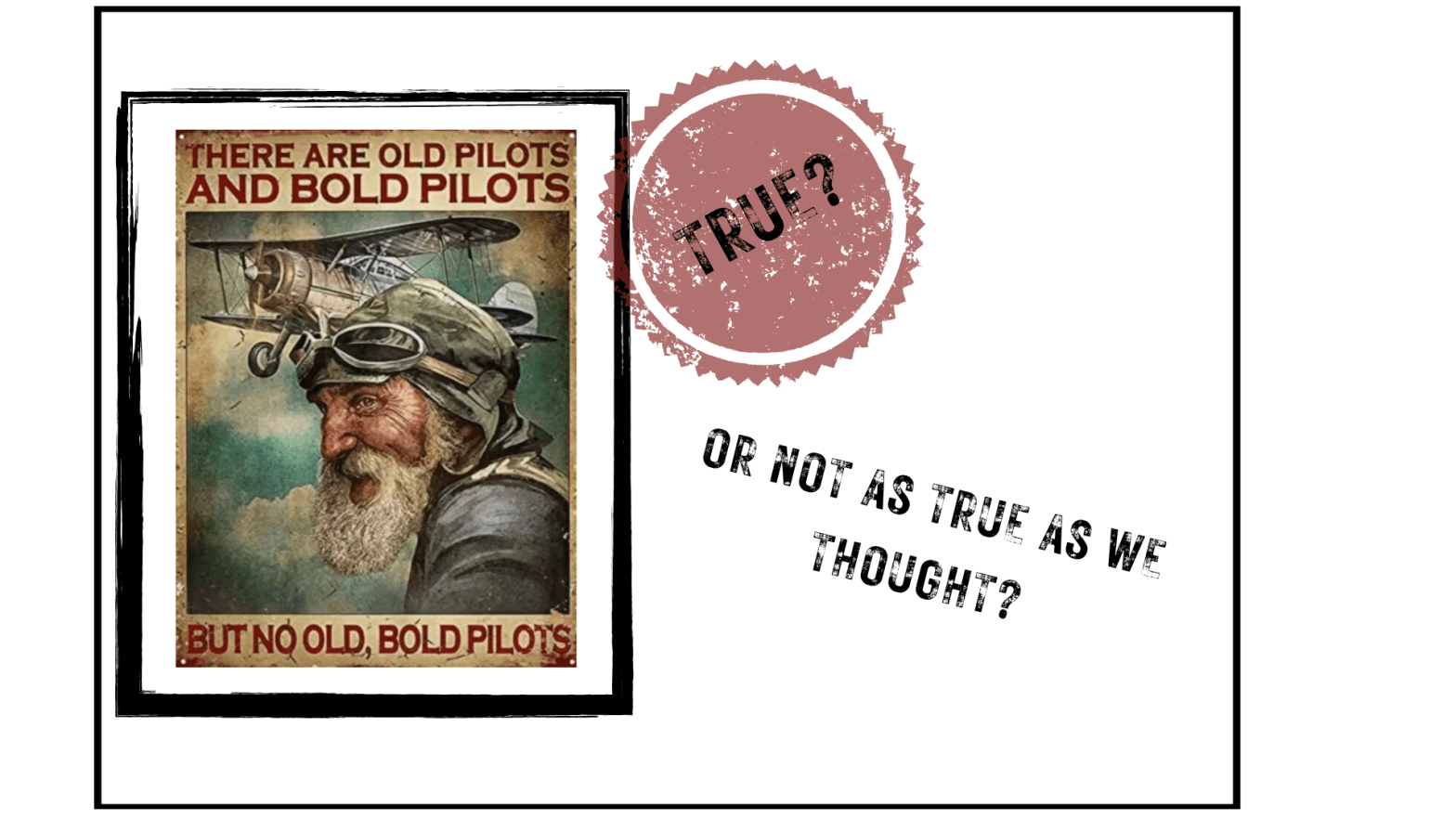

Experience ≠ Judgement:

What one well-conceived study tells us about how experience interacts with risk-taking and doesn’t always align with competence. Do traditionally used indicators of pilot competence, such as age, total flight hours, and recent flying experience, actually predict sound risk management behaviour?

H2F BITESIZE #22

This week: The Virtual Landing Pad: Facilitating rotary-wing landing operations in Degraded Visual Environments (DVE).

H2F BITESIZE #21

This week: Safety in high-risk helicopter operations: The role of additional crew in accident prevention.

H2F BITESIZE #20

This week: Resilience and brittleness in the offshore helicopter transportation system

H2F BITESIZE #19

This week: The potential of technologies to mitigate helicopter accident factors

H2F BITESIZE #18

This week: Impact of adverse weather on commercial helicopter pilot decision-making and standard operating procedures.

H2F BITESIZE #17

This week: The role of native English speakers in safe, efficient radiotelephony

H2F BITESIZE #16

This week: Distributed Cognition in Search and Rescue: Loosely coupled tasks and tightly coupled roles.

H2F BITESIZE #15

This week: Pilot Monitoring: Summary of Research and Applied Training Tools

Landing blind: from battlefield brownout to the civil cockpit

Technology may one day give us perfect vision through dust and snow. Until then, or as long as helicopters land on unprepared surfaces, brownout will remain a hazard in rotary-wing operations. Civil pilots cannot avoid it entirely, but they can manage it intelligently: Why discipline, teamwork, and training still trump technology in brownout

H2F BITESIZE #14

This week: The role of shared mental models in team coordination CRM skills of mutual performance monitoring and backup behaviors.

H2F BITESIZE #13

This week: Effects of Hydration on Cognitive Function of Pilots.

H2F BITESIZE #12

This week: Differences in physical workload between military helicopter pilots and cabin (technical) crew.

H2F BITESIZE #11

This week: Investigating Offshore Helicopter Pilots’ Cognitive Load and Physiological Responses during Simulated In-Flight Emergencies

H2F BITESIZE #10

This week: Factors affecting safety during night visual approach segments for offshore helicopters.

H2F BITESIZE #9

This week: Is It All about the Mission? Comparing Non-technical Skills across Offshore Transport and Search and Rescue Helicopter Pilots.

H2F BITESIZE #8

This week: Helicopter pilot performance and workload in a following task in a degraded visual environment

H2F BITESIZE #7

This week: Addressing Differences in Safety Influencing Factors – A Comparison of Offshore and Onshore Helicopter Operations

H2F BITESIZE #6

This week: Human Factors in Helicopter Air Ambulance Accidents, Incidents, and Safety Reports