Memory and meaning

I cdnuol’t blveiee taht I cluod aulaclty uesdnatnrd waht I was rdanieg. The phaonmneal pweor of the hmuan mnid. Aocdcrnig to rscheearch at Cmadrigbe Uinervtisy, it deosn’t mttaer in waht odrer the ltteers in a wrod are, the olny iprmoatnt thnig is taht the frist and lsat ltteer be in the rghit pclae! Amzanig, huh?

1N 7H3 B3G1NN1NG 17 WA5 H4RD BU7 N0W , oN 7H15 LIN3, YoUR M1ND 1S R34D1NG 17 4U7oM471C4LLY W17HoUT 3V3N 7H1NK1NG 4BoU7 17. B3 PRoUD! oNLY C3R741N P3oPL3 C4N R3AD 7H1S!

The two paragraphs above demonstrate very effectively how the brain uses memory and prior experience to build understanding. An English-speaking child in the early stages of learning to read would probably struggle with this task. Yet a reasonably fluent speaker of English as a foreign language would probably decipher it, perhaps with a little more time and effort, but quite happily nonetheless. This is because our perceptual system interpolates and reconstructs. The brain ‘fills in’ words by comparing what it sees with prior experience held in long-term memory, so success depends more on vocabulary and a familiar lexicon, even in a foreign language, than on anything else. If we know or understand the general meaning of a text, we will even ‘read’ words that are not there at all; in this case, we fill the gaps from our own knowledge.
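Both demonstration paragraphs follow simple mechanical rules, which shows how little of the original spelling the brain actually needs. The Python sketch below is a hypothetical illustration, not how the paragraphs were actually produced: `scramble_text` keeps the first and last letters of each word and shuffles the rest, while `leetify` applies a simplified, assumed set of number-for-letter substitutions. The second paragraph is not entirely consistent in its substitutions, so this map is only an approximation.

```python
import random
import re


def scramble_word(word: str, rng: random.Random) -> str:
    """Shuffle the interior letters, keeping the first and last in place."""
    if len(word) <= 3:
        return word  # at most one interior letter, so nothing to shuffle
    middle = list(word[1:-1])
    rng.shuffle(middle)
    return word[0] + "".join(middle) + word[-1]


def scramble_text(text: str, seed: int = 0) -> str:
    """Scramble every run of letters; spaces and punctuation are left alone."""
    rng = random.Random(seed)  # seeded so the output is reproducible
    return re.sub(r"[A-Za-z]+", lambda m: scramble_word(m.group(0), rng), text)


# Hypothetical, simplified substitution map in the style of the second paragraph.
LEET = str.maketrans({"A": "4", "E": "3", "I": "1", "O": "0", "S": "5", "T": "7"})


def leetify(text: str) -> str:
    """Upper-case the text, then swap selected letters for look-alike digits."""
    return text.upper().translate(LEET)


if __name__ == "__main__":
    print(scramble_text("According to research, only the first and last letters matter"))
    print(leetify("In the beginning it was hard"))  # 1N 7H3 B3G1NN1NG 17 W45 H4RD
```

Because the shuffle is seeded, the same input always gives the same scrambled output; changing `seed` produces a different scramble of the same words.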

Those of us in aviation who are familiar with the occasional challenge of piecing together a broken radio transmission have experienced the same reconstruction process at play, this time through hearing. Radio messages that are incomplete or difficult to hear are often understood perfectly by the experienced pilot, whereas a passenger listening in would be stumped. As with the text above, we try to match what we actually hear to a template of what is familiar or has been heard before, and we shift the meaning towards what we expect. This is where there is no substitute for experience.

Human Perception

The principal role of human perception is to process the sensory stimuli entering the body through the ears, eyes, nose, and tactile receptors, allowing us to perceive, orientate ourselves, and then act quickly and efficiently. This is certainly how we want the brain to work for us in the unpredictable and fast-moving world of aviation.

How does visual perception work?

Visual processing can be divided into three stages (Marr, 1982): early, intermediate, and late vision. In the early stage, basic processes take place, such as segregating figure from background, detecting borders, and detecting basic features (e.g., color, orientation, motion components). The intermediate stage combines this information into a temporary representation of an object. In the late stage, this temporary representation is matched against object shapes stored in long-term visual memory to achieve visual identification and recognition of the object.

The content of memory therefore directly influences how a stimulus is perceived, and observers tend to perceive the world in accordance with their expectations. For example, research has demonstrated that a yellow-orange hue is more likely to be categorized as orange on a carrot than on a banana (Mitterer & de Ruiter, 2008), and that a face is perceived as lighter if it contains prototypical White features rather than Black ones (Levin & Banaji, 2006).
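One way to make expectation-driven perception concrete is a toy Bayesian model, in which the same ambiguous hue is combined with different prior expectations about the object it appears on. This is only an illustration of the general idea, not the method used in the studies cited above; the hue scale, category centres, noise level, and priors are all invented for the example.

```python
import math

# Toy model (all numbers hypothetical): hue on an arbitrary 0-1 scale, with
# "yellow" centred at 0.3 and "orange" at 0.7, and Gaussian sensory noise.
CATEGORY_CENTRES = {"yellow": 0.3, "orange": 0.7}
NOISE_SD = 0.15


def likelihood(hue: float, centre: float, sd: float = NOISE_SD) -> float:
    """Unnormalised Gaussian likelihood; the constant cancels as both categories share sd."""
    return math.exp(-((hue - centre) ** 2) / (2 * sd ** 2))


def categorise(hue: float, prior: dict[str, float]) -> dict[str, float]:
    """Posterior probability of each colour category, given a prior expectation."""
    unnormalised = {c: likelihood(hue, mu) * prior[c] for c, mu in CATEGORY_CENTRES.items()}
    total = sum(unnormalised.values())
    return {c: p / total for c, p in unnormalised.items()}


# Hypothetical expectations: carrots are usually orange, bananas usually yellow.
carrot_prior = {"orange": 0.8, "yellow": 0.2}
banana_prior = {"orange": 0.2, "yellow": 0.8}

ambiguous = 0.5  # halfway between the two category centres
print(categorise(ambiguous, carrot_prior))  # "orange" favoured
print(categorise(ambiguous, banana_prior))  # "yellow" favoured
```

When the sensory evidence is ambiguous, the prior decides the outcome; a clearly orange hue (0.7 on this scale) is still classed as orange even with the banana prior, because strong evidence outweighs the expectation.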

We will focus here on visual perception. Not only is vision the key sense feeding the brain with stimuli in the cockpit (about 80 per cent of our total information intake is often said to come through the eyes), but sensory perception also feels like the most striking proof that something is real, which makes it very hard to overcome when what you are perceiving doesn’t seem to make sense. Any pilot who has experienced a real-life case of ‘the leans’ will attest to this. When we perceive something, we interpret it and take it as “objective”, or “real”. The assumed link between perception and physical reality is particularly strong for the visual sense. Seeing is believing, right?

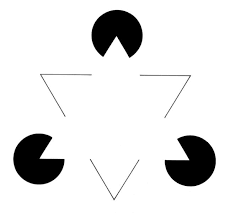

The Kanizsa Triangle is a famous example of how the brain draws upon its experience of familiar images to build its perception of an ‘object’.

A white triangle is perceived where none is drawn. This is called a subjective, or illusory, contour. We not only perceive two triangles but also interpret the whole configuration as having clear depth, with the solid white “triangle” lying in front of another “triangle” that points the opposite way.

Taking this concept from academic study into the cockpit is a small step, as it links easily to well-known visual illusions that can present significant hazards to the unsuspecting pilot. In the low-flying world of the helicopter, where the margins between VMC and IMC are small and transitions between the two can be frequent, these hazards are both more serious and more likely to occur.

The False Visual Reference

The false visual reference illusion is a direct product of the system of perception described above.

False visual reference illusions may cause the pilot to orientate the aircraft in relation to a false horizon, created by flying over a banked cloud, by night flying over featureless terrain where ground lights are indistinguishable from a dark, starry sky, or by night flying over featureless terrain with a clearly defined pattern of ground lights beneath a dark, starless sky.

Other well-known visual illusions, caused by the brain making an erroneous comparison with a familiar sight picture, include linear perspective illusions (up-sloping or down-sloping runways, or unusually wide, narrow, long, or short runways), the black hole approach, and autokinesis. In every case, the brain fails to interpret the visual stimuli correctly.
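For the linear perspective illusions, a deliberately simplified geometric sketch shows why an unusual runway can mislead. It rests on assumptions of our own, not the source’s: the pilot judges the approach angle only from the runway’s apparent length-to-width ratio, expects a flat runway of familiar proportions, and flies whatever path recreates a familiar 3 degree sight picture. The function name and the aspect ratios are hypothetical, and real pilots use many more cues, so the numbers are qualitative only.

```python
import math

# Far-field approximation (assumed): a runway of length L and width W, seen at
# angle phi to its surface, has an apparent length/width ratio of about (L / W) * sin(phi).


def actual_glidepath_deg(target_deg: float = 3.0,
                         slope_deg: float = 0.0,
                         aspect: float = 30.0,
                         familiar_aspect: float = 30.0) -> float:
    """Glidepath actually flown when the pilot recreates a familiar sight picture.

    slope_deg > 0 means the runway slopes upwards away from the pilot.
    aspect and familiar_aspect are length/width ratios (hypothetical values).
    """
    # The pilot holds the apparent ratio equal to the familiar one:
    #   aspect * sin(phi) == familiar_aspect * sin(target)
    phi = math.asin(math.sin(math.radians(target_deg)) * familiar_aspect / aspect)
    # phi is measured from the runway surface; removing the slope gives the
    # glidepath relative to the horizontal.
    return math.degrees(phi) - slope_deg


print(round(actual_glidepath_deg(), 2))               # familiar flat runway: 3.0
print(round(actual_glidepath_deg(slope_deg=1.0), 2))  # up-sloping: 2.0, flown too low
print(round(actual_glidepath_deg(slope_deg=-1.0), 2)) # down-sloping: 4.0, flown too high
print(round(actual_glidepath_deg(aspect=60.0), 2))    # narrower runway: 1.5, flown too low
print(round(actual_glidepath_deg(aspect=15.0), 2))    # wider runway: about 6, flown too high
```

Even this crude model reproduces the direction of the classic errors: an up-sloping or narrow runway makes the approach look high, so the pilot descends too low, while a down-sloping or wide runway has the opposite effect.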

The effect of a possible visual illusion was cited as a major factor in the loss of the AS365 Dauphin that crashed into the sea in Morecambe Bay on a dark night in 2006. In the moments before impact, the cockpit voice recorder captured an extremely experienced offshore helicopter crew struggling with their perception of the platform to which they were attempting to make an approach.

For the full accident report, see: G-BLUN Accident Report

Limitations of Human Perception

Beyond these inbuilt biases shaped by experience, perception is further limited by the capabilities of the human information-processing system. Our sense of hearing illustrates this well.

Humans can register and process only a limited band of sound frequencies, from about 16 Hz to 20 kHz in a young adult, and this band becomes progressively narrower with age. Infrasound (below this band) and ultrasound (above it) are simply not perceivable to us, even though they are essential for other species such as elephants and bats, respectively. As a result, these species perceive and represent the world around them very differently from the way we do.
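The band limits above amount to a simple check: any frequency outside them is not perceived at all, however loud it is. In this sketch the 16 Hz and 20 kHz limits come from the text, while the test frequencies and the reduced 15 kHz upper limit standing in for an older listener are hypothetical values chosen for illustration.

```python
# Limits from the text (young adult); real thresholds vary between individuals
# and the upper limit falls with age.
LOWER_HZ = 16.0
UPPER_HZ = 20_000.0


def classify(freq_hz: float, lower: float = LOWER_HZ, upper: float = UPPER_HZ) -> str:
    """Place a frequency below, inside, or above the audible band."""
    if freq_hz < lower:
        return "infrasonic (not heard)"
    if freq_hz > upper:
        return "ultrasonic (not heard)"
    return "audible"


for f in (10, 1_000, 18_000, 40_000):
    print(f, "Hz:", classify(f))

# Hypothetical older listener whose upper limit has dropped to 15 kHz:
print(18_000, "Hz:", classify(18_000, upper=15_000))  # now ultrasonic (not heard)
```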

Let’s look at a well-known example of the limitations of the human processing mechanism, and how it could affect us in the cockpit.

(Note: If you are struggling with this, try fixating from a distance of approximately 40 cm and moving your head slightly from right to left as you move the page towards you.)

The object disappears when its image falls on the blind spot: the area of the retina where the optic nerve leaves the eye and where, because there are no photoreceptors, visual information cannot be registered.
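Because the blind spot sits at a roughly fixed angle from the line of sight, the distance on the page at which a target vanishes grows with viewing distance, which is why moving the page towards you helps the demonstration work. The sketch below assumes the commonly cited textbook approximation that the blind spot lies about 15 degrees to the temporal side of the fixation point; the exact angle varies between individuals.

```python
import math

# Assumed approximation: blind spot about 15 degrees from the fixation point.
BLIND_SPOT_OFFSET_DEG = 15.0


def blind_spot_offset_cm(viewing_distance_cm: float,
                         offset_deg: float = BLIND_SPOT_OFFSET_DEG) -> float:
    """Separation on a flat page between the fixation mark and the blind spot."""
    return viewing_distance_cm * math.tan(math.radians(offset_deg))


for d in (30, 40, 50):
    print(f"{d} cm away: target vanishes about {blind_spot_offset_cm(d):.1f} cm from the fixation mark")
```

At the suggested 40 cm, this places the blind spot roughly 10.7 cm from the fixation mark; the same angular gap applies at any range, not just on the page.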

The blind spot has obvious implications for pilot lookout and the avoidance of mid-air collisions, which have become a growing concern for aviation authorities worldwide in recent years.

A study of over two hundred reports of mid-air collisions in the US and Canada showed that they can occur in all phases of flight and at all altitudes. However, nearly all of them occurred in daylight and in excellent visual meteorological conditions, mostly at the lower altitudes where most VFR flying is carried out. Because aircraft are concentrated around aerodromes, most collisions occurred near an aerodrome while one or both aircraft were climbing or descending, often within the circuit pattern.

All of this was the case in the mid-air collision between a Cessna 152 and a Guimbal Cabri G2 helicopter near Wycombe Air Park in November 2017.

Although much of the recent effort to combat the hazard of mid-air collision has focused on technology such as Mode S, TAS, and TCAS, pilots still need to be educated in effective lookout.

In 2013, the UK CAA published the Safety Sense leaflet ‘Collision Avoidance’, which looks in more depth at the limitations of human vision with respect to effective lookout.